Introduction

The urban road environment is rife with communication between different participants such as pedestrians, cyclists and car drivers. When crossing the road, a pedestrian would know that it is fine to go ahead when the driver behind the wheel gives a signal. This could be a flash of the headlamps or even a gesture with their arm or eyes. However, in a future where automated vehicles (AVs) will be driving in urban environments, such communication would be lacking as the driver is now a passenger and would probably not be paying much attention to the road.

Such a situation gives rise to a dangerous scenario. To mitigate this, researchers and industry have been experimenting with external human-machine interfaces (eHMIs), which are found on the exterior of the AV and communicate its intentions to other road users. Examples include LED lights, LED screens, projections on the road, robotic attachments and communication to a pedestrian’s smartphone, amongst others. With so many different approaches, it would be difficult for people to understand the many different eHMIs they would encounter. Moreover, it could be unclear with whom the car is trying to communicate.

In comes augmented reality technology. What if the communication arrived individually to the pedestrian through AR glasses? These wearables are expected to penetrate the mass market in the coming years, with the possibility of fundamentally changing the way we experience our surroundings. This would also give machines the opportunity to communicate with us in a manner we would personally understand.

Using virtual reality simulations, I will be investigating the use of such technology to improve the communication between robotic cars and humans. This is my contribution to SHAPE-IT, the project which my fellow colleagues and I are working on to support new interactions between these machines and people. Together, we are working to prepare for the environment of tomorrow.

Aims and objectives

1). To assess whether augmented reality is a suitable technology for the development of interfaces to support pedestrian-vehicle interactions.

2). Identify user-preferred design elements for AR pedestrian-vehicle interfaces.

3). Identify which interfaces are more intuitive, and which capture the pedestrian’s attention and elicit trust.

4). Develop Virtual/Augmented Reality simulation methods to investigate the interaction between the pedestrians and the vehicle, while using the AR interfaces.

5). Compare a number of user evaluation methods, with increased ecological validity, to analyse the pedestrian-vehicle interactions, while validating the simulation methods.

Original introduction video from 2020

Results

Expert perspectives: Scoping the problem

- Invited 16 researchers to give their perspectives on automated vehicles (AVs) and their interaction with vulnerable road users (VRUs) in the future urban environment.

- Experts were selected based on

- Aspects such as smart infrastructure, external human-machine interfaces (eHMIs) and the potential of augmented reality were addressed during the interviews.

- The experts agreed or stated:

- Fully autonomous vehicles will not be introduced in the near future.

- Smart infrastructure will play a large role. The experts expressed a need for AV-VRU segregation but were concerned about cost and maintenance.

- Advised against text-based and instructive eHMIs. Surface level anthropomorphism was also discouraged.

- AR: commended for its potential in assisting VRUs, but given the technological challenges, its use, for the time being, seems limited to scientific experiments.

- Challenges: privacy, invasiveness, user-friendliness, technological feasibility (e.g., brightness, image stability) and inclusiveness (access to the technology).

- Possibilities: holographic traffic lights and signs, the removal of irrelevant information in the world, an indicator nudging the user’s attention towards an AV, the projection of safety zones or coloured road surfaces, or a fence or barrier indicating that one should not cross.

- Most agreed that: AR could prove useful for resolving the one-to-many problem that occurs in eHMIs, or for resolving language barriers by providing person-specific feedback.

- AR should be a secondary cue to implicit communication and eHMIs in the real layer because not everyone can be expected to wear an AR device.

- 15 of the 16 researchers regarded AR to be a suitable or excellent research tool for simulating and testing eHMIs and for training in a realistic context such as by utilising augmented cars.

Design: Augmented reality interfaces for pedestrian-vehicle interactions

- A genius-based (experience-based) design method was used to create novel augmented-reality (AR) interfaces for the pedestrian-vehicle interactions.

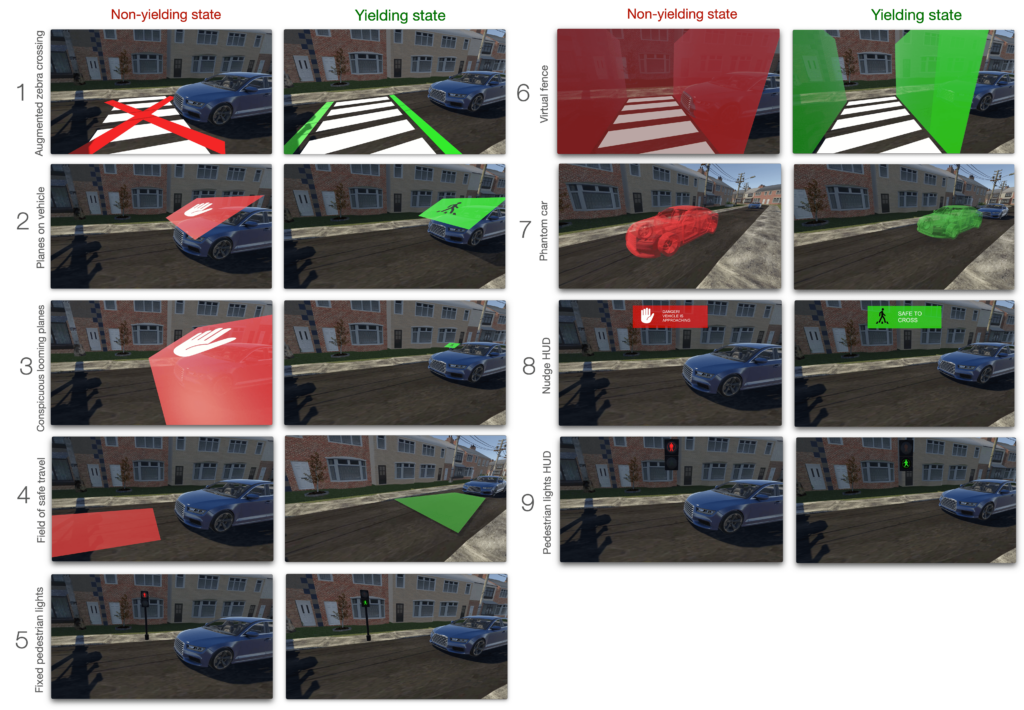

- The interfaces were based on expert perspectives extracted from Tabone, De Winter, et al. (2021a) and designed using theoretically-informed brainstorming sessions (see Figure 1 for the interfaces). In total, nine AR interfaces were designed, each with a non-yielding and a yielding state, depicted in red and green respectively. These colours were selected based on their high intuitiveness rating for signalling ‘please (do not) cross’ (Bazilinskyy et al., 2020).

- An AR heuristic evaluation was conducted on the proposed interfaces, which were subsequently implemented in AR using Unity, and demoed on an iPad Pro 2020 with LiDAR.

The following are the resultant nine AR interfaces to support pedestrian-vehicle interactions. Each interface had a non-yielding and yielding state:

| Interface | Description | Type |
| --- | --- | --- |
| 1. Augmented zebra crossing | Traditional zebra crossing design with green strips indicating a vehicle will yield, and a red cross to indicate that a vehicle will not yield. | road-mapped |
| 2. Planes on vehicle | Plane attached to the windshield, with icons of an outstretched hand (non-yielding), and a crossing pedestrian (yielding). | vehicle-mapped |
| 3. Conspicuous looming planes | Same design as (2), but the planes grow (NY) and shrink (Y) in size as a vehicle approaches. Makes use of looming. | vehicle-mapped |
| 4. Field of safe travel | A protruding ‘tongue’ which demarcates if an area in front of a vehicle is a safe zone to cross. | vehicle-mapped |
| 5. Fixed pedestrian lights | Displays a traditional pedestrian light across the road. | road-mapped |
| 6. Virtual fence | Creates a virtual tunnel which encloses a zebra-crossing. A fence gate swings open in the yielding state. | road-mapped |
| 7. Phantom car | A translucent car which indicates the vehicle’s predicted future position. | vehicle-mapped |
| 8. Nudge HUD | A floating text message and icon which informed the pedestrian whether or not it was safe to cross. | heads-up display |
| 9. Pedestrian lights HUD | The pedestrian lights (5), but presented as a HUD. | heads-up display |
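The structure of the table above can be captured in a small data model. The following is a minimal sketch only: the class and function names (`VehicleState`, `Mapping`, `ARInterface`, `by_mapping`) are hypothetical and are not taken from the Unity implementation. Only the interface names, types, and the red/green colour convention come from the text.

```python
from dataclasses import dataclass
from enum import Enum


class VehicleState(Enum):
    NON_YIELDING = "non-yielding"
    YIELDING = "yielding"


class Mapping(Enum):
    ROAD = "road-mapped"
    VEHICLE = "vehicle-mapped"
    HUD = "heads-up display"


# Colour convention stated in the text: red = non-yielding, green = yielding.
STATE_COLOUR = {
    VehicleState.NON_YIELDING: "red",
    VehicleState.YIELDING: "green",
}


@dataclass(frozen=True)
class ARInterface:
    """One row of the interface table (hypothetical representation)."""
    number: int
    name: str
    mapping: Mapping

    def colour(self, state: VehicleState) -> str:
        """Return the colour used to depict the given vehicle state."""
        return STATE_COLOUR[state]


INTERFACES = [
    ARInterface(1, "Augmented zebra crossing", Mapping.ROAD),
    ARInterface(2, "Planes on vehicle", Mapping.VEHICLE),
    ARInterface(3, "Conspicuous looming planes", Mapping.VEHICLE),
    ARInterface(4, "Field of safe travel", Mapping.VEHICLE),
    ARInterface(5, "Fixed pedestrian lights", Mapping.ROAD),
    ARInterface(6, "Virtual fence", Mapping.ROAD),
    ARInterface(7, "Phantom car", Mapping.VEHICLE),
    ARInterface(8, "Nudge HUD", Mapping.HUD),
    ARInterface(9, "Pedestrian lights HUD", Mapping.HUD),
]


def by_mapping(mapping: Mapping) -> list[str]:
    """List the names of all interfaces of a given type."""
    return [i.name for i in INTERFACES if i.mapping is mapping]
```

Grouping by type in this way makes it straightforward to compare, for instance, the heads-up display interfaces against the vehicle-mapped ones in later analyses.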

A pictorial and video demonstration of the interfaces is presented below (Figure 1, Video 1):

User evaluation: Online video questionnaire study

- The interfaces were evaluated in an online video-based questionnaire study, which was completed by 992 respondents in Germany, the Netherlands, Norway, Sweden and the United Kingdom.

- The nine interfaces were implemented in a VR environment (Figure 2) and videos of each interface operating in both states were created (Video 2).

- Interfaces were presented in random order, and each questionnaire page contained a video of both the non-yielding and yielding states. Each interface condition was then rated for:

- Intuitiveness and convincingness.

- Descriptor scale which asked participants to rate trigger speed, size, understandability, and aesthetic quality.

- At the end of each interface page, a 9-item acceptance scale (Van Der Laan et al., 1997) was used to collect further ratings on facets of usefulness and satisfaction. A free-text area was also added so that participants could elaborate further.

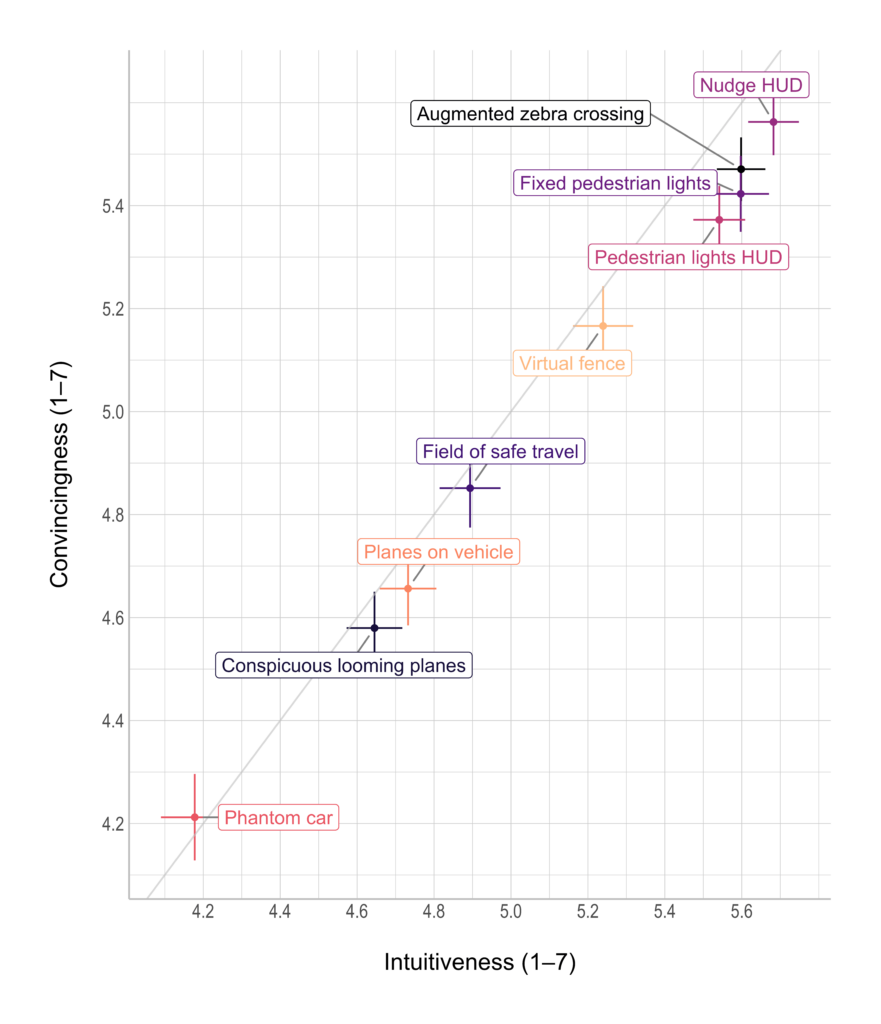

- The measured ratings were found to correlate highly (e.g., r = 0.998 between intuitiveness and convincingness alone).

- A composite score of the correlated items was calculated, with the interfaces attaining scores in the following order: Nudge HUD, Augmented zebra crossing, Fixed pedestrian lights, Pedestrian lights HUD, Virtual fence, Field of safe travel, Planes on vehicle, Conspicuous looming planes, and Phantom car.

- The same pattern can be seen in the scatter plot of convincingness against intuitiveness (Figure 3).

Figure 3: Scatter plot of convincingness against intuitiveness. Ratings for the yielding and non-yielding states were averaged. The error bars represent 95% confidence intervals.
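The correlation and composite-score steps described above can be illustrated with a short sketch. The text does not state how the composite score was computed, so this sketch assumes one common approach: standardise each rating scale and average the z-scores per interface. The function names and the three rating values per scale are made-up placeholders, not study data.

```python
import math
from statistics import mean, stdev


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def z_scores(values):
    """Standardise values to mean 0 and sample standard deviation 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def composite_scores(ratings):
    """Average the z-scores across scales, per interface.

    ratings: dict mapping scale name -> list of mean ratings, one per interface.
    This averaging rule is an assumption, not the study's documented method.
    """
    standardised = [z_scores(v) for v in ratings.values()]
    return [mean(column) for column in zip(*standardised)]


# Placeholder means for three hypothetical interfaces (NOT study data).
ratings = {
    "intuitiveness": [5.0, 4.0, 3.0],
    "convincingness": [4.8, 4.1, 3.1],
}

r = pearson_r(ratings["intuitiveness"], ratings["convincingness"])
scores = composite_scores(ratings)
# Indices of the interfaces, ordered from highest to lowest composite score.
ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
```

When the scales correlate as highly as reported, a single composite score loses little information compared with reporting each scale separately, which is presumably why a composite was used.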

- The main outcomes of the online video-based study were:

- Respondents preferred head-locked interfaces over their world-locked counterparts.

- Interfaces employing traditional traffic elements received higher ratings than others. These results highlight the limitations of the ‘genius’ design method, and the importance of involving the user earlier in the process.

- Responses related to the general use of interfaces indicated a preference for interfaces that are mapped to the street instead of the vehicle.

- Most respondents also indicated that they would like to personalise the AR interfaces, and that communication using AR interfaces in future traffic would be useful.

Although the online study offered an indication of which kinds of AR interfaces, placements in the world, and design elements are more suitable for pedestrian-vehicle interactions, its ecological validity was limited. To better understand the behaviour of potential users of the system, the ecological validity of the user evaluation was increased: certain elements of the user evaluation were replicated in a CAVE pedestrian-simulator experiment, which also examined the attention distribution of the participant (pedestrian).

User evaluation: Virtual reality simulator study

The simulator study information will be made available here once the paper is submitted for peer-review.

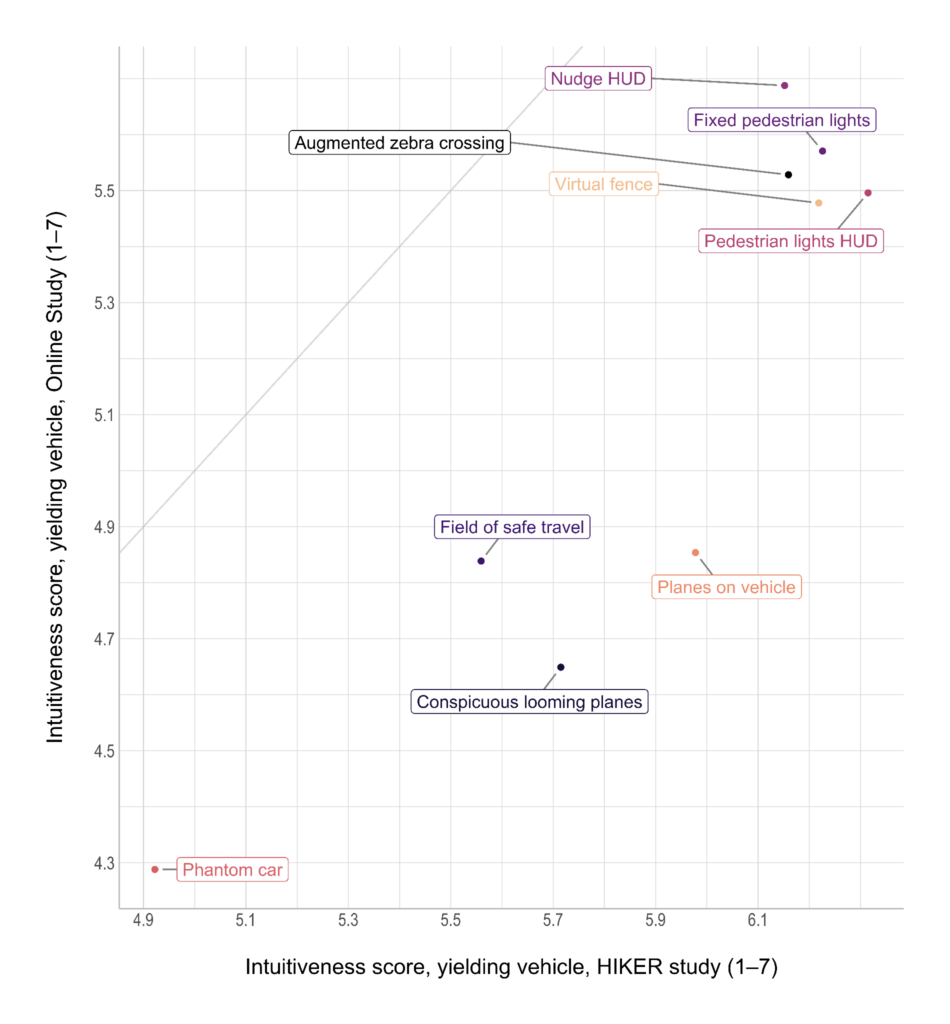

- The interfaces were evaluated by 30 participants in a CAVE pedestrian simulator.

- The study examined whether the prior online evaluation of the AR interfaces could be replicated in an immersive virtual environment, and whether AR design effectiveness depends on pedestrian attention allocation.

- To emulate visual distraction, participants had to look into an attention-attractor circle that disappeared 1 second after the AR interface appeared.

- Interfaces were rated on their intuitiveness, and participants were asked to cross the environment if they felt safe to do so.
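The timing of the visual-distraction manipulation described above can be sketched as follows. Only the 1-second offset comes from the text. The `Trial` class, the onset parameter, and the assumption that the attractor circle is visible from the start of the trial are hypothetical, and this is not the simulator's actual code.

```python
from dataclasses import dataclass

# From the text: the attractor circle disappears 1 second after the AR interface appears.
ATTRACTOR_OFFSET_S = 1.0


@dataclass(frozen=True)
class Trial:
    """Hypothetical timeline of a single trial, with time in seconds from trial start."""
    interface_onset_s: float  # assumed parameter: moment the AR interface appears

    def interface_visible(self, t: float) -> bool:
        """The AR interface is shown from its onset onwards."""
        return t >= self.interface_onset_s

    def attractor_visible(self, t: float) -> bool:
        """The attractor is assumed visible from trial start until 1 s after onset."""
        return t < self.interface_onset_s + ATTRACTOR_OFFSET_S

    def overlap_window(self) -> tuple[float, float]:
        """Interval during which both the interface and the attractor are visible."""
        return (self.interface_onset_s, self.interface_onset_s + ATTRACTOR_OFFSET_S)
```

For example, with `Trial(interface_onset_s=3.0)`, both elements are visible between 3.0 s and 4.0 s, which is the window in which the participant is still looking at the attractor while the interface is already on display.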

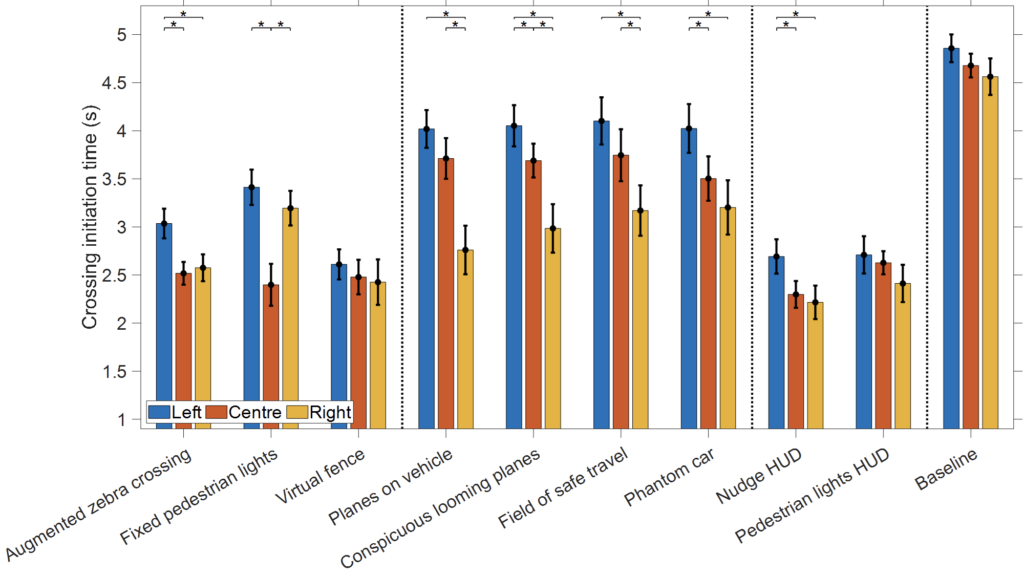

(Figure caption, partially recovered: ... the current HIKER experiment and the previous online questionnaire study (Tabone et al., 2023).)

- Results indicated a strong correlation between the ratings of the online study and those of the CAVE study (r ≈ 0.90). Based on these results, we contend that if the objective is solely to gather subjective evaluations, such as average intuitiveness ratings or preference rankings, an online questionnaire could be not only sufficient but even preferable, considering the potential for larger sample sizes.

- Head-locked interfaces and familiar designs yielded higher intuitiveness scores and quicker crossing initiations than vehicle-locked interfaces (Figure 5).

- Vehicle-locked interfaces were less effective when the attention-attractor was on the environment’s opposite side, while head-locked interfaces were unaffected (Figure 6).

- The results highlight the importance of interface placement in relation to the user’s gaze direction, and have implications for placing eHMIs on the vehicle.

Implementation: Augmented reality interfaces in head-mounted displays

A number of the interfaces were displayed through an AR head-mounted display in a real-world outdoor environment. In addition to evaluating interface intuitiveness, trustworthiness, and overall acceptance, this study offered insights into whether previous findings replicate in a real-world environment and how issues such as the pedestrians’ divided attention, proximity-compatibility of the interfaces, and egocentricity vs. allocentricity of the message affect pedestrians’ willingness to cross.

As a final step, the concept of diminished reality (DR), where information is removed rather than added, was explored. Different DR solutions were tested in scenarios where visibility is compromised, such as when a parked vehicle obstructs the view. Interfaces with high local embeddedness, that is, interfaces that conformed better to the surrounding environment (e.g., a transparent vehicle mapping onto the actual vehicle), elicited higher local presence and were therefore the most favoured by participants.

Impact

Researchers and policymakers regularly highlight the importance of standardisation of eHMI designs in terms of colours, modality (e.g., text vs. iconography), and signal type. The current proliferation of eHMI concepts, with OEMs creating their own distinct designs, is deemed problematic, as it would be overwhelming and consequently dangerous for road users to process various types of eHMIs at once.

My research focused on assessing the suitability of augmented reality (AR) technology for transparent and trustworthy pedestrian-AV interactions and on the design of AR interfaces that promote intuitive communication between pedestrians and AVs. An important strength of AR interfaces is that they are software-based and private to the user, and can, therefore, be customised. This could alleviate some of the challenges regarding the standardisation of vehicle-mounted eHMIs across different vehicle manufacturers, as users may choose their preferred language, modality, and signal type and experience the same interface for every AV. A second supposed advantage of AR interfaces compared to traditional eHMIs is that the former are personal to the user, which can prevent the common ambiguous situation where an AV needs to communicate different messages to multiple pedestrians at different locations in the traffic scene.

The design of AR interfaces itself will require regulation and standardisation. For example, AR should not obstruct information from the real world and should not overload the user with information. In addition to investigating AR interfaces, I conducted interviews with experts in the field. These interviews revealed a need for harmonisation of terminologies and definitions. Such harmonisation is deemed particularly important for policymaking, where the terminology used and the associated definitions need to be interpreted in a consistent manner.

My publications related to SHAPE-IT

Journal

Peereboom, J.*, Tabone, W.*, Dodou, D., & De Winter, J. C. F. (2024). Head-locked, world-locked, or conformal diminished-reality? An examination of different AR solutions for pedestrian safety in occluded scenarios. Virtual Reality. https://doi.org/10.1007/s10055-024-01017-9

Aleva, T.*, Tabone, W.*, Dodou, D., & De Winter, J. C. F. (2024). Augmented reality for supporting the interaction between pedestrians and automated vehicles: An experimental outdoor study. Frontiers in Robotics and AI, 11, 1324060. https://doi.org/10.3389/frobt.2024.1324060

Tabone, W., Happee, R., Yang, Y., Sadraei, E., García, J., Lee, Y. M., Merat, N., & De Winter, J. C. F. (2024). Immersive Insights: Evaluating Augmented Reality Interfaces for Pedestrians in a CAVE-Based Experiment. Under review.

Tabone, W., & De Winter, J. C. F. (2023). Using ChatGPT for human-computer interaction: A primer. Royal Society Open Science, 10, 231053. https://doi.org/10.1098/rsos.231053

Tabone, W., Happee, R., García, J., Lee, Y. M., Lupetti, M. L., Merat, N., & De Winter, J. C. F. (2023). Augmented reality interfaces for pedestrian-vehicle interactions: An online study. Transportation Research Part F: Traffic Psychology and Behaviour, 94, 170-189. https://doi.org/10.1016/j.trf.2023.02.005

Tabone, W., De Winter, J. C. F., Ackermann, C., Bärgman, J., Baumann, M., Deb, S., Emmenegger, C., Habibovic, A., Hagenzieker, M., Hancock, P. A., Happee, R., Krems, J., Lee, J. D., Martens, M., Merat, N., Norman, D. A., Sheridan, T. B., & Stanton, N. A. (2021). Vulnerable road users and the coming wave of automated vehicles: Expert perspectives. Transportation Research Interdisciplinary Perspectives, 9, 100293. https://doi.org/10.1016/j.trip.2020.100293

Conference/Symposia

Block, A., Joshi, S., Tabone, W., Pandya, A., Lee, S., Patil, V., Britten, N., & Schmitt, P. (2023). The Road Ahead: Advancing Interactions between Autonomous Vehicles, Pedestrians, and Other Road Users. Proceedings of the 32nd IEEE International Conference on Robot and Human Interactive Communication (RO-MAN). Busan, South Korea.

Joshi, S., Block, A., Tabone, W., Pandya, A., & Schmitt, P. (2023). Advancing the State of AV-Vulnerable Road User Interaction: Challenges and Opportunities. Proceedings of the AAAI 2023 Spring Symposium. Palo Alto, California, United States.

Tabone, W., Lee, Y. M., Merat, N., Happee, R., & De Winter, J. C. F. (2021). Towards future pedestrian-vehicle interactions: Introducing theoretically-supported AR prototypes. Proceedings of the 13th International Conference on Automotive User Interfaces and Interactive Vehicular Applications (pp. 209–218). Leeds, United Kingdom. https://doi.org/10.1145/3409118.3475149

Poster

Tabone, W., Happee, R., García De Pedro, J., Lee, Y. M., Lupetti, M. L., Merat, N., & De Winter, J. C. F. (2022). Augmented reality concepts for pedestrian-vehicle interactions: An online study. Poster session presented at 13th International Conference on Applied Human Factors and Ergonomics (AHFE 2022), New York, United States. https://research.tudelft.nl/en/publications/augmented-reality-concepts-for-pedestrian-vehicle-interactions-an

Tabone, W. (2022). Enhancing the transport IoT of the future: towards extended reality HMIs. Poster session presented at SHAPE-IT ScienceFest, Munich, Germany. https://research.tudelft.nl/en/publications/enhancing-the-transport-iot-of-the-future-towards-extended-realit

Tabone, W. (2021). Augmented reality for AV-VRU interactions. Poster presented at the 13th International Conference on Automotive User Interfaces and Interactive Vehicular Applications. https://www.shape-it.eu/wp-content/uploads/2021/09/ESR9_Wilbert_Poster.pdf

Conference talks

Tabone, W., Happee, R., García, J., Lee, Y. M., Lupetti, M. L., Merat, N., & De Winter, J. C. F. (2023). Augmented reality interfaces for pedestrian-vehicle interactions: An online study. 7th International Conference on Traffic and Transport Psychology. Gothenburg, Sweden.

Project Deliverables

Figalová, N., Mbelekani, N.Y., Zhang, C., Yang, Y., Peng, C., Nasser, M., Yuan-Cheng, L., Pir Muhammad, A., Tabone, W., Berge, S. H., Jokhio, S., He, X., Hossein Kalantari, A., Mohammadi, A., & Yang, X. (2021). SHAPE-IT Deliverable 1.1: Methodological framework for modelling and empirical approaches. https://doi.org/10.17196/shape-it/2021/02/D1.1

Merat, N., Yang, Y., Lee, Y. M., Berge, S. H., Figalová, N., Jokhio, S., Peng, C., Mbelekani, N.Y., Nasser, M., Pir Muhammad, A., Tabone, W., Yuan-Cheng, L., & Bärgman, J. (2021). SHAPE-IT Deliverable 2.2: An overview of interfaces for automated vehicles (inside/outside). https://doi.org/10.17196/shape-it/2021/02/D2.1

Datasets

Peereboom, J., Tabone, W., Dodou, D., & De Winter, J. C. F. (2024). Supplementary data for the paper ‘Head-locked, world-locked, or conformal diminished-reality? An examination of different AR solutions for pedestrian safety in occluded scenarios.’ [Dataset]. 4TU.ResearchData. https://doi.org/10.4121/e0fd7ca5-7cb6-4ce2-8d42-7a62e867e1d1.v1

Aleva, T., Tabone, W., Dodou, D., & De Winter, J. C. F. (2024). Supplementary data for the paper ‘Augmented reality for supporting the interaction between pedestrians and automated vehicles: An experimental outdoor study’. [Dataset]. 4TU.ResearchData. https://doi.org/10.4121/a1f9f15c-1213-4657-8e4d-a154a725d747.v1

Tabone, W., & De Winter, J. C. F. (2023). Supplementary materials for the article: Using ChatGPT for Human Computer Interaction Research: A Primer. [Data set]. 4TU.ResearchData. https://doi.org/10.4121/21916017.v1

Tabone, W., Lee, Y. M., Merat, N., Happee, R., & De Winter, J. C. F. (2021). Supplementary materials for the article: Towards future pedestrian-vehicle interactions: Introducing theoretically-supported AR prototypes. [Computer software]. 4TU.ResearchData. https://doi.org/10.4121/14933082.V1

Tabone, W., Happee, R., García De Pedro, J., Lee, Y. M., Lupetti, M. L., Merat, N., & De Winter, J. C. F. (2023). Supplementary materials for the article: Augmented reality interfaces for pedestrian-vehicle interactions: An online study. [Data set]. 4TU.ResearchData. https://doi.org/10.4121/21603678.V1

Outreach/Media

Gent, E. (2022). Making driverless cars more expressive: Exaggerated stopping movements help pedestrians read autonomous cars’ minds. https://spectrum.ieee.org/self-driving-car-safety-features

Tabone, W. (2021). Podcast on automated vehicles. Available at https://www.facebook.com/100067547438124/videos/139781804908165

Tabone, W. (2023). Self-driving cars: will they really happen? https://medium.com/@wiltabone/self-driving-cars-will-they-really-happen-f768ac21119

Tabone, W. (2023). Case Study: AR interfaces for automated vehicle-pedestrian interactions. https://medium.com/@wiltabone/self-driving-cars-will-they-really-happen-f768ac21119

Who am I?

I’m Wilbert Tabone from the island nation of Malta. I graduated BSc. (Hons.) with first class honours in Creative Computing from Goldsmiths, University of London and later read for an MSc in Artificial Intelligence at the University of Malta, conducting my research at the Bernoulli Institute for Mathematics, Computer Science and Artificial Intelligence, University of Groningen in the Netherlands.

Back home, I am actively involved in the cultural, technology and education sectors and serve as an activist for a number of Maltese and international NGOs, including the Commonwealth Youth Council, and the National Commission for the Promotion of Equality.

Prior to my PhD position, I was spearheading creative computing development in the Maltese heritage sector and formed part of the core team which developed the new Malta National Community Art Museum (MUŻA) that subsequently hosted the 2018 Network of European Museum Organizations (NEMO) conference. Furthermore, I have served as a quality assurance auditor for the Malta Further and Higher Education Authority (MFHEA). Lastly, I was also part of Malta.AI, the Malta National Task Force on Artificial Intelligence which was tasked with formulating Malta’s national strategy on AI. I have a keen interest in user experience design (UX), artificial intelligence, digital cultural heritage, algorithmic computational art and generative design.

During my PhD, I have been actively involved in the Marie Curie ITN as an ESR representative (twice), organising the Delft meeting, assisting with the Leeds meeting, and also the final event in Gothenburg. At TU Delft, I formed part of the XR community, Open Science community, and sat on the board of the art association, which organised events and published an art magazine. Moreover, I was part of the team which developed a permanent artwork on campus, as commissioned by the executive board of the university.

My affiliation

Contact details of supervisors:

Prof. dr. ir. Joost C. F. de Winter: J.C.F.deWinter@tudelft.nl

Prof. dr. ir. Riender Happee: R.Happee@tudelft.nl

Contact information and links

References

Bazilinskyy, P., Dodou, D., & De Winter, J. C. F. (2020). External human-machine interfaces: Which of 729 colors is best for signaling ‘Please (do not) cross’? In IEEE international conference on systems, man and cybernetics (SMC) (pp. 3721–3728), Toronto, Canada. https://doi.org/10.1109/SMC42975.2020.9282998.

Tabone, W., De Winter, J. C. F., Ackermann, C., Bärgman, J., Baumann, M., Deb, S., Emmenegger, C., Habibovic, A., Hagenzieker, M., Hancock, P. A., Happee, R., Krems, J., Lee, J. D., Martens, M., Merat, N., Norman, D. A., Sheridan, T. B., & Stanton, N. A. (2021). Vulnerable road users and the coming wave of automated vehicles: Expert perspectives. Transportation Research Interdisciplinary Perspectives, 9, Article 100293. https://doi.org/10.1016/j.trip.2020.100293

Van Der Laan, J. D., Heino, A., & De Waard, D. (1997). A simple procedure for the assessment of acceptance of advanced transport telematics. Transportation Research Part C: Emerging Technologies, 5, 1–10. https://doi.org/10.1016/S0968-090X(96)00025-3